Tech giants Microsoft and Google seem ready to roll out AI-powered chatbots as the next generation of internet search engines. But if early previews are any indication, humans may not quite be ready to use them.

The most remarkable thing about this new breed of chatbots is how believably human their writing is, and that can make for a game-changing search experience. At least that’s been my experience so far after a few days with Microsoft’s new Bing. Instead of a search query leading me to a Wikipedia page, or past stacks of ads to piles of disconnected Reddit threads and product reviews, Bing seems to scan them all and deliver a coherent, chatty summary with annotated links and sources.

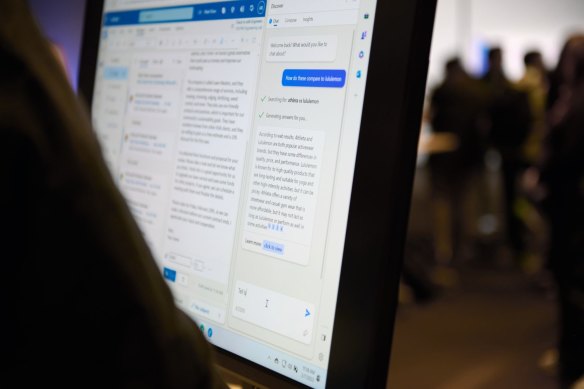

Conversations with Bing’s AI chat don’t always go how one might think. Credit: Bloomberg

When answering quick one-off search queries, the chatbot is always confident, always matter-of-fact, and never vague, even though it pulls its facts and convictions from all over an internet that’s famously full of lies and venom. The issue, then, may end up being that human minds are too quick to accept this construct as a peer. Because its top priority is to sound human at all times, Bing often behaves in a way that makes it impossible not to ascribe feelings and emotions to it.

When I peppered Bing with trivia questions on subjects I know a lot about, it made a lot of mistakes. And if you point out the errors, it may clarify what it meant, or it may invent an excuse of varying believability. But rather than chalk this up to a failure of the product, my first impulse was to talk more to the chatbot and figure out where it went wrong.

When I asked Bing for its own opinion on current events, I was always impressed by how measured and thought-through its responses seemed, even though intellectually I knew it was just adopting language that would give that effect. It’s equally good at being playful if you give the right prompts, or persuasive, or melancholy.

And as more people spend more time with the Bing preview, many examples are popping up where these conversations with a machine provoked real and unavoidable human emotion.

In one exchange posted to Reddit, Bing appears to break down after mistakenly claiming the movie Avatar: The Way of Water is not out yet. To avoid admitting to being outright wrong, it claims the current year is 2022, and repeatedly accuses the user of lying when they correct it. That exchange ends with Bing suggesting the user either apologise and admit they were wrong, or reset the chatbot and start a new conversation with a better attitude.

A New York Times columnist kept it talking for hours and managed to get it monologuing about its dark murderous desires, and how it had fallen in love with him. A Verge journalist published a story detailing some of Bing’s stranger interactions, and when another user asked Bing about the journalist by name the chatbot called him “biased and unfair”.

Any suggestion that these chatbots are sentient or have their own personality or feelings is misguided. They’re merely generating text one word at a time based on training and search data, which you can tell by how inconsistent they are with any stated opinions or even facts. But they are extremely open to suggestion and designed to please, so if you keep asking about how an AI could go bad it will tell you. And if you phrase an open question in a way that implies what you want to hear, it will often pick up on that and go along with it.

As humans, it’s also natural for us to wonder whether the thing we’re talking to is truly conscious, or whether it could turn out like the homicidal AI agents from sci-fi movies, and Bing seems to pick up on that. One researcher managed to trick Bing into revealing a list of rules its trainers had come up with to guide its responses, and in so doing revealed that the chatbot is constantly conflicted between its parameters and what its users are asking.

For example, Microsoft’s rules forbid it from identifying itself as “Sydney”, which was an internal codename pre-launch, but there are plenty of reported examples of it adopting that name when a user is pushing it to act like an evil rampant AI. The rules also prevent it from taking a stance on sensitive or political issues, and in most cases it will inform users that this is a controversial question and all it can do is summarise the facts. But I’ve managed to get it to express very personal-sounding opinions on several subjects including transgender rights and the Indigenous Voice to parliament, with a little prodding.

All of these issues are very much to be expected of a chatbot in training, and it seems likely that with more work and refinement the final products will be able to avoid controversy more easily, and get better at verifying facts or flagging potential bias or misinformation.

Recently Microsoft published a blog post responding to some of the controversies from the new Bing’s first week, noting that the chatbot’s tone and responses can veer from what was intended during extended chat sessions. It’s also considering a toggle or slider that can make the product more factual or more creative.

When asked about some of the Bing responses of concern appearing online, a Microsoft spokesperson said it always expected mistakes first thing out the gate.

“User feedback is critical to help identify where things aren’t working well, so we can learn and help the models get better,” they said.

“We are committed to improving the quality of this experience over time and to make it a helpful and inclusive tool for everyone.”

The real test will be once the tool gets beyond journalists and enthusiasts and through to the general public. Are we ready for a search engine that genuinely seems to have feelings and opinions but may accidentally insult or threaten us? Or one that may be feeding us ads, or bad information?

I asked Bing about it. It said everything would be fine.
